March 2020 was a whirlwind. When COVID-19 was first discussed in the news, many of us had no idea what the impact would be. Some of us assumed we would be back to the usual routine by the time spring quarter began (myself included)—we couldn’t have been more wrong.

The before times

At my institution, I teach introductory nutrition in both a traditional face-to-face format and a fully online version. This is a high-enrollment general education course geared toward non-science majors; it regularly enrolls around 500 students in the face-to-face course and between 700 and 900 students in the fully online course. Prior to the pandemic, students in the face-to-face course took three non-cumulative exams in person, while students in the online course took their exams on our Learning Management System (LMS) using a virtual proctoring company. Since 2018, I had worked closely with the virtual proctoring company and my teaching assistant team to ensure we had a robust protocol for exam day, including troubleshooting technical issues (they always came up), fast communication with distressed students, “on-call” scheduling with the team, handling make-up exams, and more. Through many quarters of trial and error, our team was able to establish a relatively smooth exam-taking experience for our students.

However, virtual proctoring always evoked fear in students. There were concerns over privacy, anxiety about the unfamiliar format, and inequities in access to technology: students needed a strong Wi-Fi connection and a working webcam and microphone, and they could not use tablets or Chromebooks due to software requirements. The format of a closed-book, timed exam (45-60 minutes) in a high-enrollment class also left little room for questions that could demonstrate critical thinking skills.

Re-thinking assessment

The pandemic encouraged me to re-think how we assess student learning in large general education courses. Last spring, the proctoring centers had to close down, and live proctoring with a person at the other end was no longer an option. There was an automated system in place, but it had technical difficulties. I was faced with a decision, and I felt that trying to navigate this new system with over 1,200 students enrolled in the course during a pandemic was not feasible. Thus began my pivot towards open-book exams.

One of my colleagues from graduate school had asked me a couple of years ago about the decision to do proctored exams online (she does open-book, online exams). At the time, I felt proctored exams were the gold standard for maintaining academic integrity. However, I could feel myself growing tired of the closed-ended questions that kept appearing on my exams, and I felt the exam material didn’t entirely reflect my objective for the class. I tell students on the first day that my goal is to give them the tools to make at least one small health improvement. So what if they couldn’t list all of the enzymes involved in carbohydrate digestion? I wanted them to be able to describe why they were or weren’t at risk for heart disease and how they could improve their eating pattern, physical activity habits, and lifestyle choices.

Designing an open-book exam

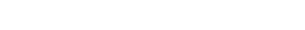

Writing an open-book exam was no easy feat. It took countless hours of question development and seeking feedback from educational specialists, as well as my teaching assistant team. I’ve now used the open-book format for a few quarters. Each exam draws on multiple question banks (>25) that combine open-ended, short answer questions, check-all-that-apply, fill-in-the-blank, drop-down menus, and some multiple choice. I now have two non-cumulative exams (instead of three) that are each designed to take an hour, but students are given a three-hour time block to complete them. In announcements and review sessions, I go over the expectations for the open-book exams, and on each exam students agree to the honor code and sign it with their digital signature, which is worth one point.

Honor code from the exams:

The aftermath

Yes, the grading load has increased compared to the automated proctored exams. But going completely online has also removed some of the in-person responsibilities our instructional team used to have, such as in-person proctoring.

Another question that comes to mind with open-book: Is everyone getting an A? When I compared fall 2019 and fall 2020, I found the average for exam #1 was 84.5% and 85%, respectively. Although we still see some issues of academic integrity, most of these arose because students had different expectations about the open-book exam. Once we were able to discuss the situation in office hours, we moved forward and used the conversation as a learning experience. Generally, students took the exam instructions seriously and even closed down their communication channel on Discord for exam days at my request. My team and I have been pleased to see the demonstration of critical thinking skills and the thoughtful answers students write on their exams. In the real world, students will be able to use resources to do their jobs, and the open-book exam style presents a situation where students have to synthesize the information and recognize what credible sources they can use.

Some guidelines for preserving academic integrity with open-book exams:

- Recognize that you can’t force students to follow the honor code; you’ll need to trust them to follow exam expectations

- Set a clear guide to what the exam rules are and what resources are allowed

- Provide consistent reminders throughout the course

- Mute the student-run communication channel (e.g., Discord) on exam day

- Search Google for leaked course materials

- Develop multiple versions of a question

- Conduct continual updates of course content to create new questions

- Incorporate open-ended questions where students can’t all write the same answer

Structured open-ended question example:

This past quarter I collected feedback from students about the open-book exams. Students appreciated the flexibility and extra time, which helped them feel less pressured and able to think clearly. One student wrote, “I preferred the open book style. It makes the exam a lot less stressful and focuses on us learning rather than memorizing.” Another student wrote, “The open-book format was great in that it didn’t make the course a memorization course. Even though it was open book, it still tested our understanding of the topics we learned about, which does reflect well on my knowledge gained.” Students were also open to the questioning style, with a student writing, “I liked the variety of questions from short answer to multiple choice allowing me to apply what I’ve learned in different ways. The open book format was certainly helpful and I prefer it.” In the feedback, students also recounted their experience with using a proctoring system in their other courses; they described feeling uncomfortable and stressed, and called it overall a “horrible experience.”

Moving forward

Every course is unique and has different learning goals. Open-book exams aren’t for all courses, and the addition of short answer questions increases the grading load, which may not be possible to handle. But this experience has opened my eyes to the various ways we can assess student learning in high-enrollment courses. So, where do we go from here? For my own class, I’ll continue to experiment with new questions and perhaps even consider restructuring “exams” into take-home assignments. As another student wrote, “Open book is cool, it’s more flexible.” I hadn’t heard the word “cool” used in association with an exam before, so I think this could be a good sign.

Debbie Fetter, PhD, is an assistant professor of teaching in the department of nutrition at UC Davis. She teaches “Nutrition 10: Discoveries and Concepts in Nutrition” in both a face-to-face format and a fully online version. In addition to teaching, she conducts research investigating differences between face-to-face and online education.